Creating a new field of engineering with self-learning, self-evolving systems - Applications useful in the real world are eagerly anticipated.

Masashi Furukawa, Doctor of Engineering,

Professor of the Graduate School of Information Science and Technology, Hokkaido University,

Division of Synergetic Information Science's Research Group of Complex Systems Engineering

Striving to elucidate the rules and mechanisms present in a complex world through an engineering approach

Recently, the term "complex" has been used in various contexts. How does complex systems engineering relate to our daily lives?

Dr. Furukawa: If we say, "The world where we live is complex," most people would respond, "Exactly." Modern society is nothing less than chaotic, with problems that defy conventional wisdom and methodology appearing everywhere in the world. The Research Group of Complex Systems Engineering aims to establish new sciences and engineering techniques by addressing such problems from four viewpoints - autonomous systems engineering, harmonious systems engineering, formative systems engineering and chaotic systems engineering. In autonomous systems engineering, we study the mechanisms by which natural systems and systems of artifacts learn and evolve while controlling themselves. System components perceive their surroundings in their respective environments, make judgments, determine their actions and act. The results of their actions interact with other components and feed back on the components themselves, causing the entire system, including the components, to emerge as something new and evolve. This is an ordinary occurrence in the natural world, but the same thing happens in the world of artifacts. For example, the Internet is a man-made artifact, but its components, i.e. Websites and users, act in a self-serving and autonomous manner, driving the evolution and development of the network as a whole. Complex systems like this have something very similar to the laws of nature. The theme of the Laboratory of Autonomous Systems Engineering is to elucidate new theories and methodologies and use them to facilitate the development of self-learning and self-evolving computers, robots and so forth.

Analogy of Web networks to a human brain - Global Scale Brain

What kind of research programs are being pursued at the Laboratory of Autonomous Systems Engineering?

Dr. Furukawa: Currently, three major projects are under way. The first studies the analysis and application of complex networks. A network is composed of nodes (points) and the links connecting them. A complex network can have hundreds of millions to billions of nodes, each with different relations and behaviors. For example, the human brain has more than 14 billion nodes (brain neurons) and the total number of link combinations amounts to 10^9 to 10^10 (10 to the 9th or 10th power). Furthermore, the World Wide Web has more than 50 billion nodes, in the form of Websites and homepages, which are interconnected by countless links. It is impossible to investigate the properties of a gigantic, complicated network like this by enumerating every relation. Therefore, statistical methods came to be employed, followed by the appearance of theories such as small-world and scale-free networks (Ex. 1).

This project regards the World Wide Web as the Global Scale Brain and strives to shed light on the characteristics of the network, as well as the intelligence the network produces, by using graph theory, evolving and learning agent technology, self-organizing maps and other methodologies. In particular, we have focused on human behavior (behavioral patterns) and have made considerable progress in developing software that learns user behavior, leading to the establishment of a university-launched venture business providing trendsetting Web services.

"Anibot," an artificial life form that keeps evolving on the virtual earth (inside computers)

Dr. Furukawa: The second project is "Anibot" (an abbreviation of Animation Robot). I once engaged in research on 3D-CAD and intelligent CAD, and began developing Anibot technology by incorporating artificial life technology, agent technology and learning theory. Specifically, we create a virtual space that has the same physical laws as the real world and place artificial life (robots) in it. The robots then learn how to move around and keep evolving (Figs. 1 and 2). Gravity and the resistance of water and air are reproduced in the space, where robots equipped with sensors, actuators and decision-making functions learn and evolve based on genetic algorithms (GA) while perceiving their surrounding environments. In conventional animation, an animator determines every move and either draws the pictures or programs them into a computer. With Anibot, however, the characters themselves determine how to move around. All the animator has to do is give instructions such as "Walk from here to there." In the future, we would like to develop software with which, even without programming skills, a virtual character of your choice can autonomously develop stories.
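As a rough illustration of how a genetic algorithm lets a character "learn" a movement, here is a minimal sketch. It is not the Anibot implementation: the genome (a list of step lengths), the fitness function `walk_fitness`, and all parameter values are hypothetical stand-ins for a real physics simulation.

```python
import random

def evolve(fitness, genome_len, pop_size=30, generations=60,
           mutation_rate=0.1, seed=0):
    """Toy genetic algorithm: selection, one-point crossover, mutation."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-1, 1) for _ in range(genome_len)]
           for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the fitter half unchanged (elitism).
        pop.sort(key=fitness, reverse=True)
        parents = pop[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, genome_len)      # one-point crossover
            child = a[:cut] + b[cut:]
            # Mutation: occasionally nudge a gene with Gaussian noise.
            child = [g + rng.gauss(0, 0.2) if rng.random() < mutation_rate else g
                     for g in child]
            children.append(child)
        pop = parents + children
    return max(pop, key=fitness)

def walk_fitness(genome):
    """Hypothetical goal: total distance walked should be close to 3.0."""
    return -abs(sum(genome) - 3.0)

best = evolve(walk_fitness, genome_len=5)
print(best, walk_fitness(best))
```

In a setting like the one described, the fitness would instead come from simulating the robot in the virtual space and measuring, for example, how far it actually moved.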

This is expected to find applications in various fields, not just entertainment, isn't it?

Dr. Furukawa: Yes. Currently, we set the parameters of the space based on an environment where the earth's physical laws apply, such as gravity and the resistance of water and air. If we change the environmental variables, however, it becomes possible to run simulations under different laws of physics. The students engaged in this project are also pursuing their research very enthusiastically.

The world's fastest-level hyper scale optimization - performing one hundred thousand to one million combinations of calculations

Learning functions and artificial life remind us of robots from science fiction, but autonomous systems engineering is a technology used in our immediate surroundings, isn't it?

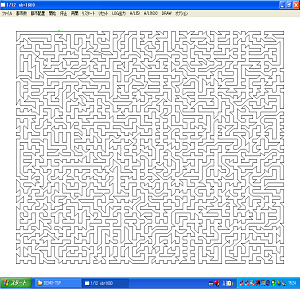

Dr. Furukawa: Exactly. The third project, "hyper scale optimization," is used in our immediate surroundings. Optimization means using computers to find the most suitable combination under given conditions. Finding the shortest route connecting 10 cities is one example. There are roughly 300,000 ways of connecting 10 cities, and the most efficient route is found by comparing all of them. I started this research 15 years ago and have engaged in applied development, through industry-university cooperation, based on a GA scheduler, which performs optimization using GA. Since this is very helpful for improving the efficiency of physical distribution systems, it is actually in use at manufacturers' delivery centers, in the transportation industry and in other areas. Computers can now calculate, based on optimization theory, schedules that people used to draw up from rules of thumb. At the same time, it has become clear that GA-based optimization has its limits. For example, it cannot handle optimization across as many as 100,000 to one million combinations, as needed for encryption or the large-scale scheduling of factory tasks. Therefore, we developed the Local Clustering Organization (LCO) algorithm by combining and further evolving GA and self-organizing learning (Ex. 2 / Fig. 3). This has significantly reduced computation time. In one example, a salesperson covering 30,000 cities, we verified that the optimization is dramatically faster and more accurate than with other methods. Since the LCO is a general solution applicable to various fields, not just physical distribution, we will continue our research and realize more practical systems.
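The route count quoted for 10 cities is an order-of-magnitude figure. As a small worked example, assuming a round trip from a fixed starting city, there are (n - 1)! visiting orders, or half that if a route and its reverse count as the same. The sketch below counts them and, for very small inputs only, finds the shortest round trip by comparing every order. The function names are illustrative and not taken from the GA scheduler or the LCO.

```python
import math
from itertools import permutations

def count_tours(n, directed=True):
    """Number of round trips through n cities from a fixed starting city."""
    tours = math.factorial(n - 1)
    return tours if directed else tours // 2

def brute_force_shortest(points):
    """Compare every visiting order; feasible only for a handful of cities."""
    start = 0

    def length(order):
        path = (start, *order, start)
        return sum(math.dist(points[a], points[b])
                   for a, b in zip(path, path[1:]))

    best = min(permutations(range(1, len(points))), key=length)
    return (start, *best), length(best)

print(count_tours(10))                  # 362880 directed round trips
print(count_tours(10, directed=False))  # 181440 if direction is ignored
print(count_tours(20))                  # already far beyond exhaustive search
```

Because the count grows factorially, exhaustive comparison stops being practical after a few more cities, which is why heuristic methods such as GA are used instead.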

Explanation

Explanation 1: "Small world" and "scale-free"

"Small world"

A "small world" network is a concept advocated by Duncan J. Watts and Steven H. Strogatz. It refers to the property that any node in a network can be reached from any other node by passing through only a few intermediate nodes.
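The small-world effect can be seen in a toy experiment. The sketch below builds a ring in which each node is linked only to its nearest neighbors, then adds a few random long-range links and compares the average number of hops between nodes. Adding shortcuts rather than rewiring existing links is a simplification of the Watts-Strogatz model, and the sizes used (200 nodes, 10 shortcuts) are arbitrary assumptions.

```python
import random
from collections import deque

def ring_lattice(n, k):
    """Each node links to its k nearest neighbours on each side."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for d in range(1, k + 1):
            j = (i + d) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def add_shortcuts(adj, m, seed=0):
    """Return a copy of the network with m extra random links."""
    rng = random.Random(seed)
    nodes = list(adj)
    new = {u: set(vs) for u, vs in adj.items()}
    added = 0
    while added < m:
        u, v = rng.sample(nodes, 2)
        if v not in new[u]:
            new[u].add(v)
            new[v].add(u)
            added += 1
    return new

def average_path_length(adj):
    """Mean hop count over all reachable pairs, via breadth-first search."""
    total = pairs = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

lattice = ring_lattice(200, 2)
small_world = add_shortcuts(lattice, 10)
print(average_path_length(lattice), average_path_length(small_world))
```

Even a handful of shortcuts shortens the typical route between two nodes, which is the property the term describes.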

"Scale-free"

A "scale-free" network is one in which a vast number of links are concentrated on a small number of nodes, while most nodes have only a few links. A group led by Albert-László Barabási investigated the number of links on Websites and found that the distribution of Websites with numerous links and those with only a few follows a power-law distribution (also referred to as the long tail). There are many cases of power-law distributions in the natural world, and the World Wide Web is believed to have properties in common with the laws of nature.
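One well-known way such a distribution can arise is preferential attachment, in which new nodes tend to link to nodes that already have many links. The following is a minimal sketch of that growth rule, assuming each new node adds `m` links; it is an illustration, not the method used in the research described here.

```python
import random
from collections import Counter

def preferential_attachment(n, m=2, seed=0):
    """Grow a network of n nodes; each new node links to m existing nodes,
    chosen with probability proportional to their current number of links."""
    rng = random.Random(seed)
    # Start from a small fully connected core of m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Every node appears in this list once per link it has, so a uniform
    # draw from it is a degree-proportional draw over nodes.
    endpoints = [v for e in edges for v in e]
    for new in range(m + 1, n):
        chosen = set()
        while len(chosen) < m:
            chosen.add(rng.choice(endpoints))
        for old in chosen:
            edges.append((new, old))
            endpoints += [new, old]
    return edges

def degrees(edges):
    """Number of links attached to each node."""
    return Counter(v for e in edges for v in e)

deg = degrees(preferential_attachment(2000))
histogram = Counter(deg.values())
print(sorted(histogram.items())[:5])   # most nodes have very few links
print(max(deg.values()))               # a few hubs have many
```

Plotting `histogram` on log-log axes would be the usual way to check for the straight-line signature of a power law.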

Explanation 2 (Fig. 3): Local Clustering Organization (LCO)

The LCO algorithm is based on the Riccati equation, with which global improvement is achieved through repeated, randomly selected local improvements. Fig. 3 shows the optimization of a route covering 4,900 points using the LCO. The calculation takes only a few tens of seconds.
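The general idea of improving a whole route through many small, randomly chosen local changes can be sketched as follows. This is not the LCO itself, whose details are not given here; it substitutes a simple 2-opt style segment reversal as the local step, and the `window` and `steps` parameters are assumptions made for illustration.

```python
import math
import random

def tour_length(points, tour):
    """Total length of the closed route visiting points in tour order."""
    n = len(tour)
    return sum(math.dist(points[tour[i]], points[tour[(i + 1) % n]])
               for i in range(n))

def random_local_improvement(points, steps=20000, window=10, seed=0):
    """Repeatedly pick a short random stretch of the route and reverse it
    whenever that makes the route shorter."""
    rng = random.Random(seed)
    n = len(points)
    tour = list(range(n))
    for _ in range(steps):
        i = rng.randrange(1, n - 1)
        j = min(n - 1, i + rng.randrange(1, window))
        # Reversing tour[i..j] replaces two edges of the route with two others.
        a, b = points[tour[i - 1]], points[tour[i]]
        c, d = points[tour[j]], points[tour[(j + 1) % n]]
        if math.dist(a, c) + math.dist(b, d) < math.dist(a, b) + math.dist(c, d):
            tour[i:j + 1] = reversed(tour[i:j + 1])
    return tour

rng = random.Random(1)
cities = [(rng.random(), rng.random()) for _ in range(200)]
before = tour_length(cities, list(range(len(cities))))
after = tour_length(cities, random_local_improvement(cities))
print(before, after)
```

Each step inspects only a small neighborhood, so its cost does not grow with the number of points, which is one reason local methods of this kind scale to large problems.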